Build Your Own ChatGPT for $100


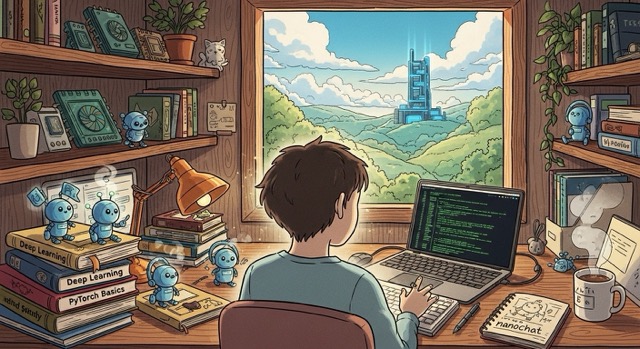

Having dissected Karpathy's nanochat codebase line-by-line, I can say this: it's the most complete, readable implementation of the ChatGPT training pipeline available. In four hours and $100, you can do what took OpenAI years and billions of dollars—train your own ChatGPT from scratch.

TL;DR: This isn't a toy demo or a fine-tuned wrapper. You'll train a 561M parameter transformer from raw text to conversational AI, achieving measurable performance on standard benchmarks—all for about the cost of a nice dinner. In January 2025, OpenAI's o3 model scored 87.5% on the ARC-AGI benchmark—the same month that anyone with $100 can train their own ChatGPT. The gap between frontier and accessible has never been smaller.

What $100 of understanding is worth: Consider a common pattern I've seen repeatedly: engineers who've only fine-tuned existing checkpoints struggle to debug production LLMs when they hallucinate dates, forget context, or produce subtly wrong code. They're treating the model as a black box. Once you've walked through a full training pipeline—from BPE tokenization through RLHF—you can identify root causes like tokenizer issues in minutes instead of months. Understanding the full stack transforms you from someone who uses LLMs to someone who debugs them.

Not a fine-tuned wrapper around someone else's model. A real, end-to-end language model trained from raw text to conversational AI.

nanochat is Andrej Karpathy's minimal implementation of the full LLM pipeline. Released in October 2025, the repository (~8,300 lines, 44 files) covers every stage from tokenizer training to a chat web UI. You'll build and deploy your own AI assistant that can hold conversations, solve math problems, and even generate code.

You'll build a complete ChatGPT pipeline from scratch

Here's what you'll create:

- ✅ A custom BPE tokenizer trained on 2 billion characters

- ✅ A 561M parameter transformer pretrained on 11.2B tokens from scratch

- ✅ A chat model fine-tuned through midtraining → SFT → RL

- ✅ A web interface for talking to your model (ChatGPT-style UI)

- ✅ Complete evaluation reports across standard benchmarks

The Investment

What it will cost you:

- ~$100 in GPU time (4 hours on 8xH100 at ~$24/hour, as of January 2025)

- An afternoon of your time

- Zero prior LLM training experience required (but PyTorch familiarity helps)

What your model will be capable of:

- Basic conversations and creative writing

- Simple reasoning and question answering

- Tool use (calculator integration)

- Math word problems (to a limited degree)

- Code generation (very basic)

Think of it as "kindergartener-level intelligence"—not going to replace GPT-4, but fully functional and a complete demonstration of how modern LLMs actually work.

Your GPU needs 80GB of VRAM—or creative alternatives

Technical Requirements

Required background:

- Familiarity with PyTorch and transformer models

- Basic command-line skills (SSH, screen, bash)

- Understanding of language modeling concepts

Compute Requirements

Access to powerful GPUs:

- Recommended: 8x H100 GPUs (80GB each) for the $100/4-hour run

- Alternative: 8x A100 GPUs (slightly slower, ~5-6 hours)

- Budget option: Single GPU (works fine, just 8x longer: ~32 hours)

Where to get GPUs:

- Lambda GPU Cloud - ~$24/hr for 8xH100 (as of January 2025)

- Vast.ai - Variable pricing, potentially cheaper

- RunPod - Good UI, competitive pricing

Community success stories: Developers have successfully trained smaller models (d16) on single consumer GPUs (RTX 4090, RTX 5070 Ti) over 10-30 hours. The codebase supports single-GPU training and automatically adapts to your hardware configuration.

For your budget planning, this means: don't wait for perfect hardware. Start with d8 on whatever GPU you have, validate your pipeline works end-to-end, then rent the big iron for the production run. Most training failures happen in setup, not scale.

**The $200 lesson:** Your training run won't crash. Probably. But if you rent 8×H100s at $24/hour without testing first, you're gambling $96 on your SSH connection staying stable, your disk not filling up, and your environment being configured correctly. A developer in the nanochat Discord reported losing $150 when their cloud provider terminated their instance mid-training due to a billing threshold. Test your full pipeline on a single cheap GPU first. Validate checkpointing works. Then scale up. The extra $10 for a test run costs far less than redoing your production run.

Cost Breakdown (as of January 2025)

| Component | Time | Cost (8xH100 @ ~$24/hr) |
|---|---|---|
| Tokenizer training | ~20 min | ~$8 |
| Base pretraining | ~2 hours | ~$48 |
| Midtraining | ~30 min | ~$12 |
| Supervised fine-tuning | ~1 hour | ~$24 |
| (Optional) RL training | ~30 min | ~$12 |
| Total | ~4 hours | ~$100 |
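The table's arithmetic is easy to reproduce and adapt to other hourly rates. Here is a minimal sketch, assuming a flat ~$24/hour rate and the stage durations listed above. `STAGES`, `stage_cost`, and `total_cost` are illustrative names, not part of nanochat.

```python
HOURLY_RATE_USD = 24.0  # assumed flat rate for an 8xH100 node; check current pricing

# Stage durations in minutes, copied from the table (RL is optional).
STAGES = {
    "tokenizer": 20,
    "base_pretraining": 120,
    "midtraining": 30,
    "sft": 60,
    "rl_optional": 30,
}

def stage_cost(minutes, hourly_rate=HOURLY_RATE_USD):
    """Cost of one stage in dollars at a flat hourly rate."""
    return minutes / 60 * hourly_rate

def total_cost(stages, hourly_rate=HOURLY_RATE_USD):
    """Sum of all stage costs in dollars."""
    return sum(stage_cost(minutes, hourly_rate) for minutes in stages.values())

for name, minutes in STAGES.items():
    print(f"{name:>18}: {minutes:4d} min  ${stage_cost(minutes):6.2f}")
print(f"{'total':>18}: {sum(STAGES.values()):4d} min  ${total_cost(STAGES):6.2f}")
```

With RL included, the stages add up to 260 minutes and about $104, which the table rounds to ~4 hours and ~$100.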


Expected Performance

This tutorial focuses on the "speedrun" configuration (d20, 561M parameters, ~$100). The repository also supports larger models: **d26** (~$300, GPT-2 level performance, ~12 hours) and d32 (1.9B parameters, ~$800, ~33 hours, publicly hosted at nanochat.karpathy.ai).

Your trained d20 speedrun model will achieve approximately:

| Benchmark | Score | What it measures |
|---|---|---|
| CORE | 22.19% | Language understanding (GPT-2 is ~29%) |
| MMLU | 31.51% | Multiple-choice reasoning (random baseline: 25%) |
| ARC-Easy | 38.76% | Elementary science questions (random baseline: 25%) |
| ARC-Challenge | 28.07% | Harder science reasoning (random baseline: 25%) |
| GSM8K | 7.58% | Math word problems with tool use (random baseline: 0%) |
| HumanEval | 8.54% | Python coding problems (random baseline: 0%) |

These numbers aren't impressive compared to GPT-4. That's the point. You'll understand exactly why GPT-4 is better—scale, data, and RLHF. More importantly, you'll understand what's achievable at each investment level.

Provision your GPU and start training

Step 1: Provision Your GPU Node

Using Lambda GPU Cloud:

- Sign up at lambda.ai/service/gpu-cloud

- Click "Launch Instance"

- Select: 8x H100 SXM (80 GB)

- Choose Ubuntu 22.04 LTS

- Add your SSH key and launch

Step 2: Connect and Clone

ssh ubuntu@<your-instance-ip>

# Verify GPUs

nvidia-smi

# Clone nanochat

git clone https://github.com/karpathy/nanochat.git

cd nanochat

The entire codebase is just ~8,300 lines across 44 files: minimal and readable.

Step 3: (Optional) Enable Logging

For experiment tracking with Weights & Biases:

pip install wandb

wandb login

export WANDB_RUN=my_first_chatgpt

Step 4: Launch Training

Run the complete pipeline:

screen -L -Logfile speedrun.log -S speedrun bash speedrun.sh

Screen tips:

- Ctrl-a d: Detach (training continues)

- screen -r speedrun: Re-attach

- tail -f speedrun.log: Monitor progress

Seven steps from raw text to chatbot

The script automatically executes:

- Install dependencies (uv, Rust)

- Download FineWeb data (~24GB)

- Train BPE tokenizer (~20 min)

- Base pretraining (~2 hours)

- Midtraining (~30 min)

- Supervised fine-tuning (~1 hour)

- Generate evaluation reports

Building a vocabulary from 2 billion characters takes 20 minutes

What Happens

Builds a custom BPE tokenizer with 65,536 tokens, trained on 2 billion characters from FineWeb.

Special tokens for chat:

SPECIAL_TOKENS = [

"<|bos|>", # Beginning of sequence

"<|user_start|>", # User messages

"<|user_end|>",

"<|assistant_start|>", # Assistant responses

"<|assistant_end|>",

"<|python_start|>", # Tool use (calculator)

"<|python_end|>",

"<|output_start|>", # Tool output

"<|output_end|>",

]

These tokens structure conversations and enable tool use during inference.
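To make the role of these tokens concrete, here is a rough sketch of how a conversation could be flattened into one training string. It assumes messages arrive as role/content dictionaries. `render_conversation` is a hypothetical helper for illustration, and the real tokenizer works on token ids rather than strings.

```python
def render_conversation(messages):
    """Wrap each message in its role's start/end special tokens."""
    parts = ["<|bos|>"]
    for message in messages:
        role = message["role"]
        if role not in ("user", "assistant"):
            raise ValueError(f"unsupported role: {role}")
        parts.append(f"<|{role}_start|>{message['content']}<|{role}_end|>")
    return "".join(parts)

example = render_conversation([
    {"role": "user", "content": "Why is the sky blue?"},
    {"role": "assistant", "content": "Rayleigh scattering."},
])
print(example)
```

Because each special token maps to a single vocabulary entry, the model can learn turn boundaries as ordinary next-token predictions.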

The Muon optimizer achieves faster training through orthogonalized gradients

The Core Training

Trains a 561M parameter transformer from scratch on ~11.2 billion tokens.

Model architecture:

- Parameters: 561M

- Layers: 20

- Attention heads: 10

- Embedding dimension: 1280

- Sequence length: 2048 tokens

Key features:

- Pre-norm architecture for stability

- Rotary position embeddings (RoPE)

- Multi-Query Attention (MQA) for faster inference

- ReLU² activation

- RMSNorm without learnable parameters
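The 561M figure can be checked with back-of-the-envelope arithmetic. The sketch below assumes a model dimension of 1280 (depth × 64), full-size Q/K/V/output projections, a 4x MLP expansion, untied input and output embeddings, and no biases or norm parameters. `estimate_params` is an illustrative helper, not a nanochat function.

```python
def estimate_params(depth, model_dim, vocab_size):
    """Rough transformer parameter count under the assumptions stated above."""
    # Attention: Q, K, V and output projections, each model_dim x model_dim.
    attention = 4 * model_dim ** 2
    # MLP: up-projection to 4 * model_dim and down-projection back.
    mlp = 8 * model_dim ** 2
    # Untied token embedding and unembedding matrices.
    embeddings = 2 * vocab_size * model_dim
    return depth * (attention + mlp) + embeddings

d20 = estimate_params(depth=20, model_dim=1280, vocab_size=65536)
print(f"{d20 / 1e6:.0f}M parameters")  # 561M parameters
```

Roughly 393M of those parameters sit in the transformer blocks and 168M in the two embedding matrices, which is why the 65,536-token vocabulary matters at this scale.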

Dual Optimizer Innovation

Uses two optimizers simultaneously:

- Muon (MomentUm Orthogonalized by Newton-Schulz) for 2D matrix parameters (attention and feedforward layers)

- AdamW for the embedding and unembedding layers

This dual-optimizer approach exploits the different optimization dynamics of these parameter types. Muon is particularly effective for the dense weight matrices in transformer blocks, while AdamW handles the sparse gradient updates in embeddings. The intended payoff is faster convergence than training every parameter with a single optimizer.

In practice, Muon's speedup compounds over long runs—a 4-hour job can become 2.5 hours on comparable configurations. At cloud GPU rates, that's real money saved.

Training Progress

Watch for:

Step 0000 | train_loss: 11.2341 | val_loss: 11.1876

Step 0500 | train_loss: 8.4562 | CORE: 8.92%

Step 1000 | train_loss: 7.1234 | CORE: 15.43%

Step 1500 | train_loss: 6.5432 | CORE: 19.34%

Final | train_loss: 5.8765 | CORE: 22.19% ✓

Training loss decreases from ~11 to ~6, with CORE score improving to 22.19%.
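A quick sanity check on the step-0 number: a freshly initialized model spreads probability roughly uniformly over the vocabulary, so its cross-entropy should sit near the natural log of the vocabulary size. A small worked check, assuming the 65,536-token vocabulary from the tokenizer step:

```python
import math

vocab_size = 65536
# Cross-entropy (in nats) of a uniform guess over the whole vocabulary.
uniform_loss = math.log(vocab_size)
print(f"{uniform_loss:.4f}")  # 11.0904
```

If your first logged loss is far above this value, suspect initialization or data-loading problems before blaming the optimizer.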

Midtraining bridges raw language modeling to conversation

Bridging Language to Conversation

Teaches the model:

- Chat format with special tokens

- Tool use (calculator integration)

- Multiple-choice reasoning

Training data:

- 50K conversations (SmolTalk)

- ~5K multiple-choice examples (MMLU, ARC)

- 5K synthetic calculator examples

Results:

- ARC tasks: 28-36%

- MMLU: 31.11%

- GSM8K: 2.50% (still weak)

- HumanEval: 6.71% (basic coding emerges)

SFT teaches instruction-following through selective loss masking

Learning to Follow Instructions

SFT uses selective loss masking—only training on assistant responses:

<|user_start|> Question <|user_end|> <|assistant_start|> Answer <|assistant_end|>

[-----ignore-----] [----------train on this----------]
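In code, selective masking usually means replacing the targets for non-assistant positions with a sentinel that the loss function skips. Here is a minimal sketch with plain lists. The token ids, the `IGNORE_INDEX` value, and `build_targets` are assumptions for illustration, and the choice to score only tokens after `<|assistant_start|>` is one reasonable convention rather than a confirmed detail of nanochat.

```python
IGNORE_INDEX = -1  # assumed sentinel; the loss function must be told to skip it

def build_targets(token_ids, is_assistant):
    """Next-token targets with every non-assistant position masked out.

    token_ids:    list of ints for one rendered conversation
    is_assistant: list of bools, True where the token is part of an assistant reply
    """
    targets = []
    for position in range(len(token_ids) - 1):
        next_token = token_ids[position + 1]
        # Only score predictions whose target token belongs to the assistant.
        targets.append(next_token if is_assistant[position + 1] else IGNORE_INDEX)
    return targets

# Hypothetical ids: 1=user_start, 2=user_end, 3=assistant_start, 4=assistant_end.
tokens = [1, 10, 11, 2, 3, 20, 21, 4]
mask = [False, False, False, False, False, True, True, True]
print(build_targets(tokens, mask))  # [-1, -1, -1, -1, 20, 21, 4]
```

The model still reads the user's question as context. It just receives no gradient for predicting it.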

Training data (~21K examples):

- ARC-Easy: 2,351

- ARC-Challenge: 1,119

- GSM8K: 7,473

- SmolTalk: 10,000

Performance gains:

| Metric | Before | After | Improvement |
|---|---|---|---|
| ARC-Easy | 35.61% | 38.76% | +3.15% |
| GSM8K | 2.50% | 4.55% | +2.05% |
| HumanEval | 6.71% | 8.54% | +1.83% |

SFT gains look small in absolute terms but they're compounding on a solid base. The real wins are qualitative—the model stops hallucinating format and starts actually answering questions.

Reinforcement learning boosts GSM8K from 4.55% to 7.58%

Enable RL to boost math performance:

# Uncomment in speedrun.sh

torchrun --standalone --nproc_per_node=8 -m scripts.chat_rl -- --run=$WANDB_RUN

Uses policy gradient methods (REINFORCE) to improve:

- GSM8K: 4.55% → 7.58% (+3.03%)

- Time: ~30-45 minutes
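The core of REINFORCE fits in a few lines: sample several answers, reward the correct ones, and push up the log-probability of samples that beat the group average. The sketch below assumes a binary reward (1 for a correct final answer) and a mean-reward baseline. The function names and numbers are made up for illustration and are not taken from scripts.chat_rl.

```python
def reinforce_weights(rewards):
    """Advantage per sample: its reward minus the mean reward of the group."""
    baseline = sum(rewards) / len(rewards)
    return [reward - baseline for reward in rewards]

def policy_gradient_loss(sample_logprobs, rewards):
    """REINFORCE surrogate loss: -mean(advantage * log-prob of the sample)."""
    weights = reinforce_weights(rewards)
    weighted = sum(w * lp for w, lp in zip(weights, sample_logprobs))
    return -weighted / len(rewards)

rewards = [1.0, 0.0, 0.0, 1.0]          # two of four sampled answers were correct
logprobs = [-12.0, -9.0, -15.0, -11.0]  # summed token log-probs per sample (made up)
print(reinforce_weights(rewards))       # [0.5, -0.5, -0.5, 0.5]
print(policy_gradient_loss(logprobs, rewards))
```

Minimizing this loss raises the likelihood of the rewarded samples and lowers it for the rest. If every sample gets the same reward, all advantages are zero and the batch contributes no gradient.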

Your model is ready to chat—here's how to talk to it

Command-Line Interface

source .venv/bin/activate

python -m scripts.chat_cli

Example conversation:

You: Why is the sky blue?

Assistant: The sky appears blue because of a phenomenon called Rayleigh scattering. When sunlight enters Earth's atmosphere, it collides with air molecules. Blue light has shorter wavelengths and gets scattered more than other colors, making the sky appear blue to our eyes.

You: What's 15 times 23?

Assistant: Let me calculate that. <|python_start|>15 * 23<|python_end|><|output_start|>345<|output_end|> The answer is 345.

Web Interface (ChatGPT-style)

For a better experience, launch the web UI:

python -m scripts.chat_web

This starts a server on port 8000. If you're on a cloud instance:

- Find your instance's public IP

- Visit: http://<your-public-ip>:8000

Features:

- ChatGPT-like interface (built with FastAPI + HTML/JS)

- Streaming responses (tokens appear as they're generated)

- Conversation history

- Adjustable temperature and top-k sampling

- Tool use visible (calculator results)
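The temperature and top-k controls both reshape the next-token distribution before sampling. Here is a small standard-library sketch of that idea. `sample_top_k` is an illustrative function, not the web server's actual sampling code.

```python
import math
import random

def sample_top_k(logits, temperature=1.0, top_k=None, rng=random):
    """Sample a token index after temperature scaling and top-k filtering."""
    if temperature <= 0:
        # Treat non-positive temperature as greedy decoding.
        return max(range(len(logits)), key=lambda i: logits[i])
    # Candidate indices, highest logit first.
    indices = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    if top_k is not None:
        indices = indices[:top_k]
    scaled = [logits[i] / temperature for i in indices]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(value - peak) for value in scaled]
    return rng.choices(indices, weights=weights, k=1)[0]

print(sample_top_k([0.1, 5.0, 0.3], temperature=0))  # 1
```

Lower temperatures sharpen the distribution toward the top token, while a small top-k removes the long tail of unlikely tokens entirely.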

Three innovations make $100 training possible

The Muon Optimizer

One of nanochat's key ingredients is the Muon optimizer (MomentUm Orthogonalized by Newton-Schulz), which grew out of the modded-nanogpt speedrun project. Unlike traditional AdamW, Muon orthogonalizes gradient updates for 2D matrix parameters, providing:

- Better gradient flow through deep networks

- Faster convergence for matrix parameters

- Stability in low precision (BF16)

The algorithm performs Newton-Schulz iteration to compute orthogonalization, which runs stably in bfloat16 on GPU.
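To see what "orthogonalizing" an update means, here is a pure-Python sketch of the classical cubic Newton-Schulz iteration, X ← 1.5·X − 0.5·X·Xᵀ·X. This is the textbook variant, chosen for clarity. Muon itself uses a tuned polynomial and runs on GPU tensors, so treat the helper names and step count below as assumptions for illustration.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def newton_schulz_orthogonalize(gradient, steps=20):
    """Approximate the nearest (semi-)orthogonal matrix to `gradient`."""
    # Scale by the Frobenius norm so every singular value starts at or below 1,
    # which keeps the iteration inside its convergence region.
    norm = math.sqrt(sum(v * v for row in gradient for v in row)) + 1e-12
    x = [[v / norm for v in row] for row in gradient]
    for _ in range(steps):
        xxt_x = matmul(matmul(x, transpose(x)), x)
        # Each step pushes every singular value s toward 1 via s -> 1.5*s - 0.5*s**3.
        x = [[1.5 * a - 0.5 * b for a, b in zip(row_x, row_y)]
             for row_x, row_y in zip(x, xxt_x)]
    return x

update = newton_schulz_orthogonalize([[2.0, 0.0, 1.0], [0.0, 1.0, 3.0]])
gram = matmul(update, transpose(update))
print([[round(v, 6) for v in row] for row in gram])
```

For a full-rank input, the printed Gram matrix should be close to the identity. The update keeps the gradient's directions but equalizes their magnitudes.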

KV Cache for Efficient Inference

The inference engine implements sophisticated KV caching with:

- Lazy initialization: Cache created on first use

- Dynamic growth: Starts small, grows in 1024-token chunks

- Batch prefill: Prefill once, clone cache for multiple samples

- Efficient attention masking: Handle causal attention correctly

This allows generating multiple samples (for best-of-N sampling) without recomputing the prompt encoding.
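The lazy-allocation, chunked-growth, and clone-after-prefill ideas can be shown with a toy cache. This sketch uses Python lists in place of GPU tensors and a single layer. `GrowingKVCache` is a hypothetical class, not the engine's real implementation.

```python
class GrowingKVCache:
    """Toy single-layer KV cache that grows its capacity in fixed-size chunks."""

    def __init__(self, chunk_size=1024):
        self.chunk_size = chunk_size
        self.keys = []    # storage is allocated lazily on the first append
        self.values = []
        self.length = 0   # number of positions actually filled

    @property
    def capacity(self):
        return len(self.keys)

    def append(self, key, value):
        if self.length == self.capacity:
            # Out of room (or first use): extend storage by one chunk.
            self.keys.extend([None] * self.chunk_size)
            self.values.extend([None] * self.chunk_size)
        self.keys[self.length] = key
        self.values[self.length] = value
        self.length += 1

    def clone(self):
        """Copy a prefilled cache so several samples can reuse one prompt prefill."""
        other = GrowingKVCache(self.chunk_size)
        other.keys = list(self.keys)
        other.values = list(self.values)
        other.length = self.length
        return other

prompt_cache = GrowingKVCache(chunk_size=4)
for position in range(5):
    prompt_cache.append(f"k{position}", f"v{position}")
samples = [prompt_cache.clone() for _ in range(3)]  # one prefill, three continuations
print(prompt_cache.capacity, prompt_cache.length)   # 8 5
```

Each clone can then append its own generated tokens without touching the shared prompt entries.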

Tool Use Integration

The engine implements a calculator tool via safe Python expression evaluation:

- Model generates <|python_start|>

- Engine collects tokens until <|python_end|>

- Decode tokens to string: "123 + 456"

- Evaluate safely: 579

- Force-inject result: <|output_start|>579<|output_end|>

- Continue generation

The model learns to invoke the tool, and the engine provides correct results.
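The steps above can be sketched on plain strings. This version assumes the tool only needs +, -, *, and / on numeric literals, and it post-processes finished text instead of intercepting tokens during generation as a real engine would. `safe_eval` and `inject_tool_outputs` are illustrative names.

```python
import ast
import operator

_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def safe_eval(expression):
    """Evaluate basic arithmetic on numeric literals; reject everything else."""
    def visit(node):
        if isinstance(node, ast.Expression):
            return visit(node.body)
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](visit(node.left), visit(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -visit(node.operand)
        raise ValueError("unsupported expression")
    return visit(ast.parse(expression.strip(), mode="eval"))

def inject_tool_outputs(text):
    """Insert an output span after each complete <|python_start|>...<|python_end|> span."""
    start, end = "<|python_start|>", "<|python_end|>"
    result, cursor = "", 0
    while True:
        begin = text.find(start, cursor)
        finish = text.find(end, begin) if begin != -1 else -1
        if finish == -1:
            # No further complete tool call: keep the remaining text unchanged.
            return result + text[cursor:]
        value = safe_eval(text[begin + len(start):finish])
        result += text[cursor:finish + len(end)]
        result += f"<|output_start|>{value}<|output_end|>"
        cursor = finish + len(end)

print(inject_tool_outputs("Sum: <|python_start|>123 + 456<|python_end|>"))
```

Walking the syntax tree instead of calling `eval` means function calls, attribute access, and names are rejected, so a generated expression cannot run arbitrary code.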

When things go wrong—and how to fix them

CUDA Out of Memory

Solutions:

- Reduce device batch size: --device_batch_size=16 (or 8, 4, 2, 1)

- Train smaller model: --depth=12 instead of 20

- Reduce sequence length in GPTConfig

Data Download Fails

Solutions:

- Retry download: python -m nanochat.dataset -n 240

- Check existing shards: ls -lh ~/.cache/nanochat/data/

- Download subset first: python -m nanochat.dataset -n 50

Loss Not Decreasing

Debug steps:

- Verify data loading with a small test run

- Check learning rate is not too high/low

- Try smaller model for faster debugging: --depth=6

Slow Training Speed

Expected: ~12s per step on 8xH100 for d20

If slower:

- Check GPU utilization: nvidia-smi -l 1 (should be ~95%+)

- Monitor CPU usage for data loading bottlenecks

- Verify all 8 GPUs are active

Scaling up: from $100 to GPT-2 level performance

Scaling to Larger Models

Train a d26 model (GPT-2 level, ~$300):

# Download more data

python -m nanochat.dataset -n 450 &

# Increase depth, reduce batch size

torchrun --standalone --nproc_per_node=8 -m scripts.base_train -- \

--depth=26 --device_batch_size=16

Expected results:

- CORE: ~29% (matches GPT-2!)

- Time: ~12 hours

- Cost: ~$300

Using Your Own Data

For pretraining:

- Format as plain text files (one document per line)

- Place in ~/.cache/nanochat/data/custom/

- Modify dataset loader to use your directory

For fine-tuning: Create a new task class and add it to the SFT mixture in the training script.

Single GPU Training

Remove torchrun, everything auto-adapts:

python -m scripts.base_train --depth=20

Same final result, 8x longer time via automatic gradient accumulation.
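The "8x longer" figure follows from keeping the total batch size fixed while fewer GPUs share the work. Here is a worked sketch, assuming a total batch of 524,288 tokens per optimizer step, 32 sequences per device, and 2048-token sequences. These are illustrative values, so check them against your own configuration. `grad_accum_steps` is a hypothetical helper.

```python
def grad_accum_steps(total_batch_tokens, device_batch_size, seq_len, num_gpus):
    """Micro-steps to accumulate before each optimizer update."""
    tokens_per_micro_step = device_batch_size * seq_len * num_gpus
    if total_batch_tokens % tokens_per_micro_step != 0:
        raise ValueError("total batch must be a multiple of the per-step token count")
    return total_batch_tokens // tokens_per_micro_step

TOTAL_BATCH_TOKENS = 524_288  # assumed tokens per optimizer step
print(grad_accum_steps(TOTAL_BATCH_TOKENS, 32, 2048, num_gpus=8))  # 1
print(grad_accum_steps(TOTAL_BATCH_TOKENS, 32, 2048, num_gpus=1))  # 8
```

Halving the device batch size to fit a smaller GPU doubles the accumulation steps again, trading wall-clock time for memory while the optimizer sees the same effective batch.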

$100 and 4 hours—that's what a working ChatGPT costs

In 4 hours and $100, you've accomplished something remarkable:

✅ Trained a custom tokenizer from 2B characters of web text

✅ Pretrained a 561M parameter transformer from scratch

✅ Fine-tuned through mid → SFT → RL pipeline

✅ Deployed a ChatGPT-like web interface

✅ Achieved measurable performance on standard benchmarks

✅ Understood modern LLM techniques at implementation level

What This Proves

LLM development is accessible. You don't need millions of dollars—just $100 and an afternoon. The nanochat project demonstrates that transparency and education matter more than scale. With ~8,300 lines of readable code, you can understand the full pipeline from tokenization through deployment.

Your Model's Capabilities

Your model can:

- Hold basic conversations

- Answer factual questions (~30% accuracy on MMLU)

- Solve simple math problems (with calculator tool)

- Generate basic code (8.5% on HumanEval)

- Write creative content

Is it GPT-4? No. But it's yours, and you understand exactly how it works.

What's Next?

Immediate steps:

- Scale to d26 for GPT-2 level performance

- Fine-tune on your domain-specific data

- Experiment with different architectures

- Share your results with the community

Longer-term directions:

- Multi-modal models (add vision)

- Longer context (8K+ tokens)

- Better RL (PPO, GRPO)

- Efficient deployment (quantization, distillation)

The Bigger Picture

The future of AI research shouldn't require million-dollar budgets. It should reward good ideas, executed well. nanochat is a step toward that future—a complete, transparent, accessible implementation of the techniques powering ChatGPT.

Fork it. Modify it. Break it. Improve it. Share what you learn.

And most importantly: you now know how to build ChatGPT.

Quick Reference

Full pipeline:

bash speedrun.sh

Individual stages:

# Tokenizer

python -m scripts.tok_train --max_chars=2000000000

# Base training

torchrun --standalone --nproc_per_node=8 -m scripts.base_train -- --depth=20

# Midtraining

torchrun --standalone --nproc_per_node=8 -m scripts.mid_train

# SFT

torchrun --standalone --nproc_per_node=8 -m scripts.chat_sft

# Chat

python -m scripts.chat_cli

python -m scripts.chat_web

Monitoring:

# Watch training log

tail -f speedrun.log

# GPU utilization

watch -n 1 nvidia-smi

# Disk usage

du -sh ~/.cache/nanochat/

Happy training! 🚀

Questions? Open an issue on GitHub or start a discussion.

Want to share results? Tag @karpathy on Twitter.

Remember: The best way to understand LLMs is to build one yourself. You just did.

Before you launch your $100 training run:

- Verify GPU availability first. Run nvidia-smi and confirm 8 GPUs are visible. Discovering driver issues after downloading ~24GB of data wastes hours.

- Start the data download early. The ~24GB FineWeb download runs in the background, so kick it off while reading this post.

- Test with depth=8 before depth=20. A 30-minute sanity check catches tokenizer bugs before your 4-hour run.

- Monitor the first 100 steps live. Watch speedrun.log for loss decreasing. NaN or flat loss at step 50 means wasted compute.

- Save your config and final checkpoint. Export your exact settings, because you'll want to reproduce this result.

Sources

Research Papers

- Muon Optimizer: Liu, J. et al. (2025). "Muon is Scalable for LLM Training". arXiv:2502.16982. Shows 2x computational efficiency over AdamW with proper scaling techniques.

- RoPE (Rotary Position Embeddings): Su, J. et al. (2021). "RoFormer: Enhanced Transformer with Rotary Position Embedding". arXiv:2104.09864. Position encoding used in nanochat architecture.

- Multi-Query Attention: Shazeer, N. (2019). "Fast Transformer Decoding: One Write-Head is All You Need". arXiv:1911.02150. Reduces memory bandwidth requirements for faster inference.

- GPT-2: Radford, A. et al. (2019). "Language Models are Unsupervised Multitask Learners". OpenAI. Original GPT-2 paper establishing baseline performance.

Datasets & Training

- FineWeb: Penedo, G. et al. (2024). "The FineWeb Datasets: Decanting the Web for the Finest Text Data at Scale". arXiv:2406.17557. 15-trillion token pretraining dataset used in nanochat.

- DCLM: Li, J. et al. (2024). "DataComp-LM: In search of the next generation of training sets for language models". arXiv:2406.11794. CORE benchmark for evaluation.

Benchmarks

- MMLU: Hendrycks, D. et al. (2020). "Measuring Massive Multitask Language Understanding". ICLR 2021. 57-task benchmark for multitask accuracy.

- ARC Challenge: Clark, P. et al. (2018). "Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge". arXiv:1803.05457. Science reasoning benchmark.

- HumanEval: Chen, M. et al. (2021). "Evaluating Large Language Models Trained on Code". arXiv:2107.03374. Code generation benchmark.

Implementation

- nanochat Repository: Karpathy, A. karpathy/nanochat. Complete implementation of the training pipeline.

- modded-nanogpt: Jordan, K. et al. KellerJordan/modded-nanogpt. Speedrun repository demonstrating Muon optimizer innovations.

Cloud GPU Providers (pricing as of January 2025)

- Lambda GPU Cloud - 8xH100 @ ~$24/hr

- Vast.ai - Variable spot pricing

- RunPod - Competitive on-demand pricing

Industry Benchmarks and Research (as of January 2025)

- Stanford HAI AI Index 2024: Measuring Trends in AI. Annual report tracking global AI development costs, compute trends, and capability benchmarks.

- Epoch AI Training Compute Trends: Compute Trends Across Three Eras of Machine Learning. Tracks exponential growth in training compute; models doubling every 6 months through 2024.

- MLCommons MLPerf Training Benchmarks: MLPerf Training Results. Industry-standard benchmarks for comparing training efficiency across hardware configurations.

Regulatory Context

For teams considering production deployment: The EU AI Act (entered into force August 2024) classifies general-purpose AI models by compute thresholds. Models trained with >10^25 FLOPs face additional transparency and safety evaluation requirements. By the standard 6 × parameters × tokens estimate, the nanochat speedrun uses roughly 4×10^19 FLOPs (6 × 561M × 11.2B), far below this threshold, but teams scaling to larger models should review EU AI Act compliance requirements. US Executive Order 14110 (October 2023) similarly established reporting requirements for models above certain compute thresholds, although it was rescinded in January 2025. Educational and research uses have generally received favorable treatment under both frameworks.
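To gauge how far a training run sits from the threshold, the common rule of thumb is about 6 FLOPs per parameter per training token. A quick check using this post's speedrun numbers follows. The 6·N·D rule is an approximation, not a regulatory definition of training compute.

```python
params = 561e6        # d20 parameter count
tokens = 11.2e9       # pretraining tokens
eu_threshold = 1e25   # FLOPs threshold cited above

# Forward plus backward pass costs roughly 6 FLOPs per parameter per token.
training_flops = 6 * params * tokens
print(f"{training_flops:.2e} FLOPs")
print(f"threshold is ~{eu_threshold / training_flops:,.0f}x larger")
```

The speedrun lands near 3.8×10^19 FLOPs, more than five orders of magnitude under the threshold.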

A hundred dollars. Four hours. Your own ChatGPT. The barrier isn't resources anymore—it's deciding to start.
